Safety cases don’t usually fail where people expect

When people hear “safety case failure,” they tend to picture something pretty straightforward:

A missing requirement

An incorrect assumption

A bad calculation

A hazard that was overlooked

And to be fair, those things do happen.

But if you’ve spent any real time around complex systems, you start to notice something different.

That’s usually not how things actually go wrong.

Most safety cases don’t fail because something obvious is missing.

They fail in a much quieter way.

Everything looks right on its own… but something breaks when it all comes together.

What a safety case is really assuming

At its core, a safety case is trying to answer a simple question:

“Is this system safe enough to operate in the real world?”

To get there, we build it up from pieces:

hazard identification (functional hazard assessment, FHA)

failure analysis (fault tree analysis, FTA; failure modes and effects analysis, FMEA; and so on)

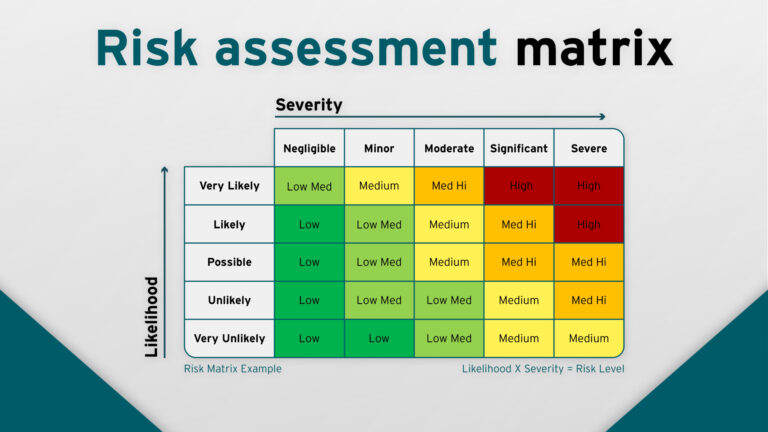

probability and severity classifications

mitigations like redundancy, alerts, procedures

evidence from testing and verification

Each of these is usually handled in a clean, structured way.

You take one problem at a time, define it clearly, and solve it.

And that works… up to a point.

Because that whole approach quietly assumes something:

that the system behaves like a collection of separate pieces.

The hidden assumption: clean separation of concerns

Most safety cases are built on this idea, whether explicitly or not:

You can analyse parts of the system independently, and then safely combine the results.

So you look at things like:

Sensor A

System B

Function C

Procedure D

You analyse each one, show that it behaves correctly, and then step back and say:

“Overall, this is safe.”

But real systems—especially modern aircraft—don’t behave like that.

They’re not neatly separated.

They’re tightly connected, constantly influencing each other.

And that’s where things start to get interesting.

Where interaction breaks the model

In reality, systems don’t fail in clean, single-threaded ways.

They fail through combinations of things that all seem reasonable on their own.

You might have something like:

a sensor giving slightly degraded data

the automation responding exactly as designed

the pilot reacting correctly to what they see

procedures being followed as written

Nothing is “wrong” in isolation.

If you looked at any one of those pieces, you’d probably sign it off as acceptable.

But put them together, and the picture the automation and the crew are working from can stop matching what the aircraft is actually doing.

That’s the uncomfortable part.

The system is behaving correctly… and still drifting somewhere unsafe.

This is usually where safety cases start to lose their grip.

Not because the analysis was bad—

but because the space of interactions was bigger than anyone really captured.
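To get a feel for how quickly that space grows, here is a rough sketch in Python. The element names and the three states (nominal, degraded, failed) are assumptions made up for illustration; they don't come from any real safety case.

```python
from itertools import product

# Hypothetical system elements and states, chosen purely for illustration.
ELEMENTS = ["sensor", "automation", "pilot", "procedure"]
STATES = ["nominal", "degraded", "failed"]


def single_deviation_cases(n_elements: int, n_states: int) -> int:
    """Cases where exactly one element is off-nominal and the rest are fine."""
    return n_elements * (n_states - 1)


def all_combined_cases(n_elements: int, n_states: int) -> int:
    """Every combination of states across all elements."""
    return n_states ** n_elements


def degraded_only_combinations(elements, states):
    """Combinations where nothing has failed outright,
    but at least two elements are degraded at the same time."""
    return [
        combo
        for combo in product(states, repeat=len(elements))
        if "failed" not in combo and combo.count("degraded") >= 2
    ]


if __name__ == "__main__":
    n, k = len(ELEMENTS), len(STATES)
    print("one-at-a-time cases:", single_deviation_cases(n, k))
    print("all combinations:", all_combined_cases(n, k))
    print("quietly degraded combinations:",
          len(degraded_only_combinations(ELEMENTS, STATES)))
    # The gap widens fast as the system grows.
    print("20 elements, one at a time:", single_deviation_cases(20, k))
    print("20 elements, all combinations:", all_combined_cases(20, k))
```

With four elements, that's 8 one-at-a-time cases against 81 combinations. At twenty elements it's 40 against roughly 3.5 billion. Real analyses prune this space heavily, but the sketch shows why "we looked at each piece" and "we looked at every combination" are very different claims.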

The problem of system coupling

As systems evolve, they become more connected.

More data shared, more dependencies, more feedback loops.

Sensors don’t just feed one system anymore—they feed many

Automation relies on combined, “fused” data

Control laws depend on interpreted system state

Pilots rely on what the system is telling them

External inputs like ATC feed into the same picture

At that point, a small issue in one place doesn’t stay contained.

It moves.

It propagates across layers in ways that aren’t always obvious when you design the system.

And importantly—it doesn’t always move in a straight line.

That’s what makes it hard to reason about.
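One way to make that propagation visible is to treat the system as a graph and ask what a single source can reach. The sketch below assumes a small, made-up data-flow graph (the node names and edges are hypothetical, loosely based on the list above) and walks it breadth-first.

```python
from collections import deque

# Hypothetical data-flow graph: each key feeds the elements in its list.
# The names and edges are illustrative, not taken from a real aircraft.
FEEDS = {
    "air_data_sensor": ["air_data_computer"],
    "air_data_computer": ["flight_control_law", "autopilot", "primary_display"],
    "autopilot": ["flight_control_law", "primary_display"],
    "flight_control_law": ["aircraft_response"],
    "primary_display": ["pilot"],
    "atc": ["pilot"],
    "pilot": ["autopilot", "flight_control_law"],
    "aircraft_response": ["air_data_sensor"],  # feedback loop
}


def downstream_of(source, feeds):
    """Breadth-first walk: every element a problem at `source` could reach,
    mapped to the minimum number of hops it takes to get there."""
    hops = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for consumer in feeds.get(node, []):
            if consumer not in hops:  # also stops us looping forever
                hops[consumer] = hops[node] + 1
                queue.append(consumer)
    del hops[source]
    return hops


if __name__ == "__main__":
    for element, distance in sorted(downstream_of("air_data_sensor", FEEDS).items(),
                                    key=lambda item: item[1]):
        print(f"{element}: {distance} hop(s) away")
```

In this toy graph, a problem at one sensor can reach everything except ATC within three hops, including the pilot. And because of the feedback loop, its effects eventually come back around to the sensor itself.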

Why redundancy does not always protect the safety case

Redundancy is one of those things everyone leans on.

And for good reason: it works well when the sources really are independent and it’s clear which one has failed.

The idea is straightforward:

multiple independent sources

clear logic to decide which one to trust

But in more complex setups, something subtler happens.

You can end up with:

multiple signals that are all technically valid

disagreement between sources that are each “correct” in their own context

no obvious way to determine which one reflects reality

So instead of removing uncertainty, redundancy can sometimes spread it around.

Now the system isn’t missing information—it’s dealing with conflicting information.

And that’s a very different problem.
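Here is a minimal sketch of that situation, using a simple mid-value select voter (one common textbook scheme, not a claim about any particular aircraft). The plausibility range, agreement limit, and readings are all assumed values picked for illustration.

```python
from statistics import median

# Assumed limits, in arbitrary units. Purely illustrative.
VALID_RANGE = (-10.0, 30.0)  # each reading must fall inside this to be "valid"
AGREEMENT_LIMIT = 2.0        # max spread before sources count as disagreeing


def vote(readings):
    """Mid-value select with a basic range check and spread check.

    Returns (selected_value, all_in_range, sources_agree).
    """
    low, high = VALID_RANGE
    all_in_range = all(low <= r <= high for r in readings)
    sources_agree = max(readings) - min(readings) <= AGREEMENT_LIMIT
    return median(readings), all_in_range, sources_agree


if __name__ == "__main__":
    true_value = 5.0
    # One source is right; two share a common bias (say, the same
    # environmental effect). All three are inside the valid range.
    readings = [5.0, 11.8, 12.1]

    selected, all_in_range, sources_agree = vote(readings)
    print("selected:", selected)
    print("all readings individually valid:", all_in_range)
    print("sources agree:", sources_agree)
    print("error against truth:", abs(selected - true_value))
```

Every reading passes its own validity check, the voter does exactly what it was designed to do, and the value it selects comes from the biased pair. The one correct source gets outvoted. The disagreement flag is raised, but it doesn't say which source to believe.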

Human operators: the final interpretation layer

In most safety cases, the human is treated in a fairly clean way:

they follow procedures

they step in when something goes wrong

they act as the final decision-maker

But in practice, the human is doing something much harder.

They’re interpreting the system in real time, often under pressure, often with incomplete clarity.

And that interpretation depends on things like:

whether the system outputs are consistent

how much they trust what they’re seeing

how much time they have

how clearly the system is communicating its state

If everything lines up, this works well.

But if the system starts giving mixed signals, the human doesn’t see neat system boundaries.

They just see a confusing picture.

And that moment—where things stop making sense—is rarely modelled properly.

Where safety cases quietly degrade

If you look at where safety cases are strongest, it’s usually here:

normal operation

clear, single-failure scenarios

well-defined and well-understood faults

Where they start to struggle is in the grey areas:

multiple small things going wrong at once

systems that are degraded but still functioning

outputs that conflict but aren’t obviously wrong

transitions between modes where behaviour shifts

These situations are hard to fully list out in advance.

And more importantly, they’re not about single components.

They’re about how things interact.

The real failure mode: unmodelled interaction space

This is the bit that tends to get missed.

Not missing hazards—but missing combinations.

You get situations like:

System A behaving correctly with slightly degraded input from System B

System B behaving correctly but based on outdated input from System C

The human responding correctly to what A and B are showing

Every step checks out.

Individually, everything is defensible.

But the combination leads to the wrong overall picture.

And because that exact combination wasn’t explicitly considered, it slips through.

That’s where risk quietly builds up.
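A toy version of that chain, with made-up numbers: each stage is allowed to add a small error and is checked only against its own limit, while the end-to-end error is checked separately. The limits and error values are assumptions for illustration.

```python
# Assumed limits, in arbitrary units. Purely illustrative.
STAGE_LIMIT = 1.0   # the most error any single stage may add and still pass
SYSTEM_LIMIT = 2.0  # the most end-to-end error the overall picture can tolerate


def run_chain(true_value, stage_errors):
    """Pass a value through a chain of stages, each adding its own error.

    Returns (per_stage_ok, total_error, system_ok).
    """
    value = true_value
    per_stage_ok = []
    for error in stage_errors:
        # Each stage is judged only on the error it adds itself.
        per_stage_ok.append(abs(error) <= STAGE_LIMIT)
        value += error
    total_error = abs(value - true_value)
    return per_stage_ok, total_error, total_error <= SYSTEM_LIMIT


if __name__ == "__main__":
    # Hypothetical contributions: C is slightly out of date, B is slightly
    # degraded, A adds a little of its own. Each is within its stage limit.
    stage_errors = [0.9, 0.8, 0.7]

    per_stage_ok, total_error, system_ok = run_chain(100.0, stage_errors)
    print("every stage within its own limit:", all(per_stage_ok))
    print("end-to-end error:", round(total_error, 3))
    print("overall picture within limit:", system_ok)
```

Every stage passes its own check, and the combined error still lands outside what the overall picture can tolerate. Nothing in the per-stage checks would flag it.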

Why this matters in modern aviation systems

Modern aircraft aren’t just mechanical systems with backup components anymore.

They’re layered, interconnected systems:

distributed sensing

real-time computation

automation making continuous decisions

humans working inside that loop

external systems feeding into it all

So there’s a shift happening:

Safety isn’t just about whether individual parts work.

It’s about whether the whole system stays understandable when things start to degrade.

That’s a much harder problem.

And it’s not always something traditional safety cases fully capture.

Closing thought

A safety case can be technically correct at every step.

Every hazard identified

Every failure mode analysed

Every mitigation justified

And still fall short in the real world.

Not because it was careless or incomplete in the usual sense—

but because the system it describes doesn’t behave as a set of isolated parts.

It behaves like a network.

Something that’s constantly interacting, constantly shifting.

And in that kind of system, safety isn’t just about preventing things from breaking.

It’s about keeping the overall picture coherent—especially when things start to drift away from normal.
