Risk assessments are everywhere in aviation.

Before a change. After an incident. During design. During operations. During audits.

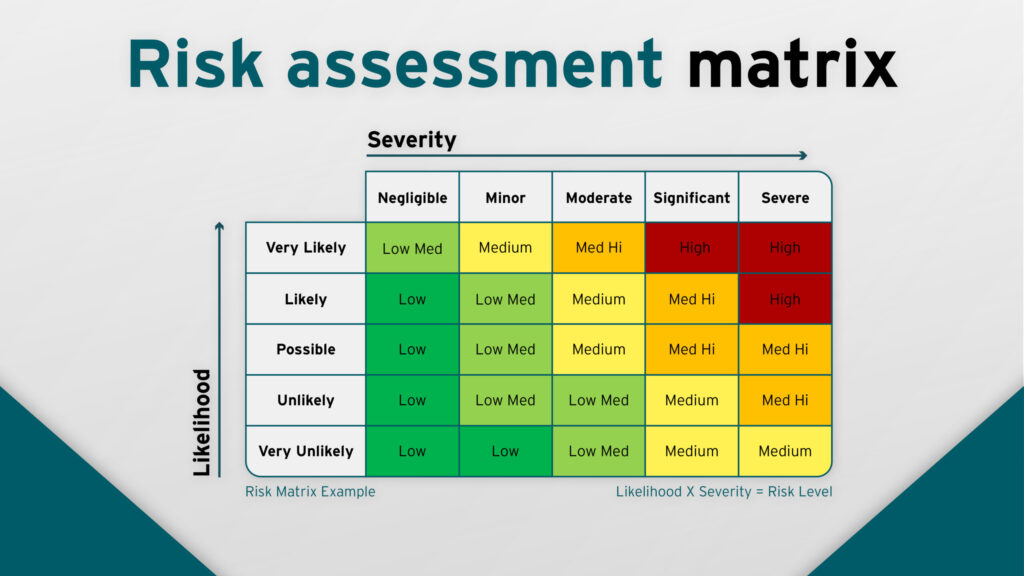

We fill in the tables. We assign severity and likelihood. We land somewhere in the matrix. Maybe we add a mitigation or two.

And then there’s this quiet, unspoken feeling:

“OK, we’ve assessed the risk, so we’re good.”

But that’s not really what just happened.

A risk assessment is just a snapshot of your understanding

At its core, a risk assessment is not a safety control.

It’s a statement.

More specifically:

a structured statement about how well you think you understand a situation.

That’s it.

It doesn’t:

- remove the hazard

- control the system

- guarantee outcomes

- or prevent drift

It just captures your current mental model—frozen in time.

The matrix feels objective… but it’s not

Severity × likelihood looks clean. Almost scientific.

But if you zoom in, both axes are full of judgement:

- How severe is “major” vs “hazardous”?

- What does “remote” actually mean in this context?

- Based on what data?

- Under what conditions?

Most of the time, teams converge on a number not because it’s correct, but because it’s defensible and familiar.

And that’s where things quietly shift from:

understanding risk

to

standardising how we describe it
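To make that concrete, here is a minimal sketch of a 5×5 matrix lookup. The category names, the 1 to 5 scores, and the band thresholds are all invented for illustration; they are not taken from any regulator's or operator's actual matrix. The point is only that a single judgement call on one axis can move the result into a different band.

```python
# Hypothetical scoring scheme: names, scores and thresholds are assumptions
# made up for this example, not an official risk matrix.
SEVERITY = {"negligible": 1, "minor": 2, "major": 3, "hazardous": 4, "catastrophic": 5}
LIKELIHOOD = {"extremely improbable": 1, "improbable": 2, "remote": 3, "occasional": 4, "frequent": 5}


def risk_band(severity: str, likelihood: str) -> str:
    """Multiply the two category scores and map the product to a band."""
    score = SEVERITY[severity] * LIKELIHOOD[likelihood]
    if score >= 12:  # assumed threshold
        return "unacceptable"
    if score >= 6:  # assumed threshold
        return "tolerable"
    return "acceptable"


# Same hazard, same likelihood, one step of disagreement on severity.
print(risk_band("major", "remote"))      # score 9  -> tolerable
print(risk_band("hazardous", "remote"))  # score 12 -> unacceptable
```

The arithmetic is exact. The inputs are not, and the output inherits every bit of that judgement.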

The real risk is false confidence

The danger with risk assessments isn’t that they’re wrong.

It’s that they feel complete.

Once it’s documented, reviewed, and accepted, it starts to carry weight:

- “this has been assessed”

- “this risk is acceptable”

- “this is within tolerance”

But systems don’t care what box you selected.

They evolve:

- operational context changes

- workload shifts

- interfaces degrade

- assumptions get stretched

And the risk assessment doesn’t move with them.

Most assessments are built on stable assumptions

If you look closely, risk assessments tend to assume:

- procedures are followed as written

- system behaviour remains consistent

- humans respond as expected

- the environment stays within normal bounds

But real operations are not built on stability.

They’re built on adaptation.

So over time, the system you assessed slowly becomes:

not quite the system you’re operating anymore

So what should a risk assessment actually do?

If it’s not a safety control, then what is it?

Used properly, it’s incredibly valuable—but in a different way.

A good risk assessment should:

- expose assumptions

- force clarity on system behaviour

- highlight uncertainty

- identify where margins are thin

- trigger deeper thinking, not close it off

It’s less about the number you land on…

…and more about the conversations you had to get there.

The subtle shift that makes it useful

The mindset shift is simple:

stop treating risk assessments as decisions, and start treating them as inputs.

They should feed into:

- design improvements

- operational constraints

- monitoring strategies

- training focus

- and ongoing review

Not act as the final word.
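One way to picture an assessment as an input rather than a verdict is to store its assumptions alongside the rating and ask, on a schedule, which of them nobody has checked recently. The sketch below is only an illustration of that idea: the `Assumption` and `RiskAssessment` classes, the `stale_assumptions` helper, the 180-day window, and the example hazard are all hypothetical, not part of any standard or tool.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Assumption:
    text: str
    last_verified: date  # when someone last checked this still holds


@dataclass
class RiskAssessment:
    hazard: str
    rating: str
    assessed_on: date
    assumptions: list[Assumption] = field(default_factory=list)


def stale_assumptions(assessment: RiskAssessment, today: date,
                      max_age: timedelta = timedelta(days=180)) -> list[Assumption]:
    """Return assumptions not re-verified within max_age (an assumed review window)."""
    return [a for a in assessment.assumptions if today - a.last_verified > max_age]


# Hypothetical example record.
assessment = RiskAssessment(
    hazard="aircraft towing near an active taxiway",
    rating="tolerable",
    assessed_on=date(2024, 1, 10),
    assumptions=[
        Assumption("procedures are followed as written", date(2024, 1, 10)),
        Assumption("workload stays at assessed levels", date(2024, 6, 1)),
    ],
)

for a in stale_assumptions(assessment, today=date(2024, 9, 1)):
    print(f"Re-check: {a.text}")
```

The rating stays in the record, but the assumptions are what drive the follow-up. A stale assumption becomes a prompt for monitoring or review instead of a line in a filed document.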

Final thought

Risk assessments don’t make systems safe.

They make systems legible—briefly.

And that’s useful.

But only if you remember that what you captured is:

- incomplete

- time-sensitive

- and dependent on assumptions that will drift

Because safety isn’t created in the moment you assign a risk rating.

It’s created in how the system behaves after that document is filed away.
