There was a time when most aviation safety discussions were grounded firmly in the physical world, where structures, engines, and mechanical systems dominated the conversation, and where failure could be understood through deformation, fracture, or wear, all of which followed patterns that engineers had spent decades learning to predict and manage.

Software, by contrast, existed somewhat in the background, supporting these systems rather than defining them, and as a result, it did not initially attract the same level of structured assurance thinking.

That balance has shifted quite significantly.

In many modern aircraft, software is no longer simply supporting the system; it is the medium through which the system exists, interprets inputs, and produces outputs. That introduces a different kind of challenge, one that is less about physical integrity and more about behavioural correctness.

It doesn’t fail in a way that is easy to reason about

One of the more subtle difficulties with software is that it does not wear out or exhibit warning signs in the way physical systems often do, and when it produces an incorrect outcome, it is usually executing exactly what was specified and coded, just not what was intended.

This distinction matters, because it shifts the problem away from detecting failure and toward ensuring correctness from the outset, which is a much less tangible objective.

Testing alone cannot fully solve this, because the absence of observed errors does not necessarily imply the absence of faults, particularly in complex systems with a large number of possible states and interactions.
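To give a rough sense of scale, the arithmetic below counts input combinations for a hypothetical function with a modest number of discrete inputs. The input counts and the test rate are illustrative assumptions, not figures from any real system, and the count ignores timing and internal state, which would only make it larger.

```python
# Hypothetical function: 20 independent boolean flags plus 4 mode inputs
# with 8 possible values each. All numbers are illustrative assumptions.
BOOLEAN_INPUTS = 20
MODE_INPUTS = 4
VALUES_PER_MODE = 8
TESTS_PER_SECOND = 1000  # assumed throughput of an automated test rig
SECONDS_PER_DAY = 86_400


def input_combinations(boolean_inputs: int, mode_inputs: int, values_per_mode: int) -> int:
    """Count distinct combinations of discrete inputs, ignoring timing and history."""
    return (2 ** boolean_inputs) * (values_per_mode ** mode_inputs)


def days_to_exhaust(combinations: int, tests_per_second: int) -> float:
    """Days needed to run one test per combination at a constant rate."""
    return combinations / tests_per_second / SECONDS_PER_DAY


combos = input_combinations(BOOLEAN_INPUTS, MODE_INPUTS, VALUES_PER_MODE)
days = days_to_exhaust(combos, TESTS_PER_SECOND)

print(combos)          # 4294967296
print(f"{days:.1f}")   # 49.7
```

Even this small example needs weeks of continuous execution to cover every combination once, which is why coverage of the input space cannot be the only source of confidence.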

Assurance, rather than strength, becomes the focus

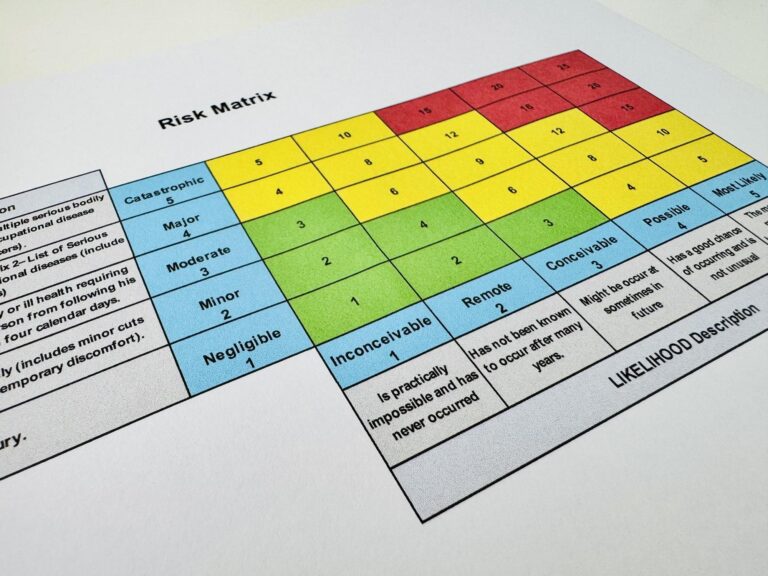

In this context, the question is no longer about how robust a component is in a physical sense, but rather about how much confidence is required that a given piece of software will behave correctly under all relevant conditions, including those that may not be explicitly anticipated during development.

This is where structured approaches such as DO-178C and the concept of Design Assurance Levels (DALs), which DO-178C itself calls software levels A through E, begin to play a central role, not as abstract classifications, but as mechanisms for scaling the level of rigour applied to development and verification activities.

These levels are not intended to describe the software itself in isolation, but rather the consequences associated with its failure within the broader system.

And that connection does not originate in software

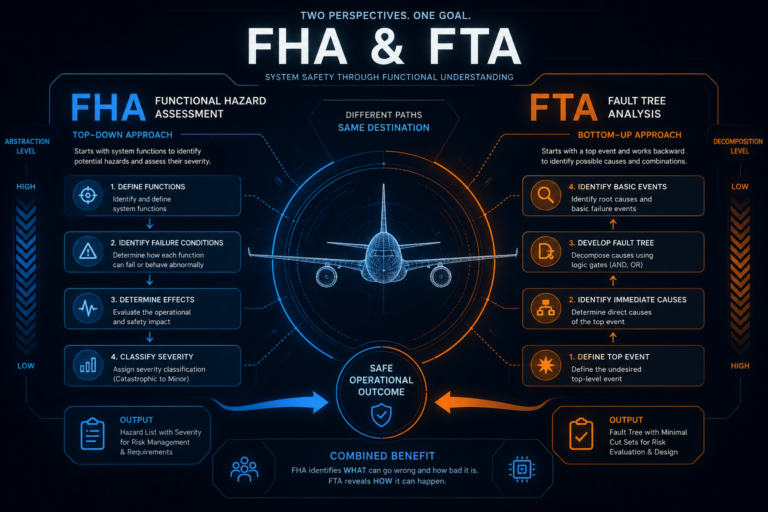

The assignment of assurance levels does not begin at the point where software is written, nor is it something that should be decided based on perceived importance or complexity of code, even though that is sometimes how it is informally approached.

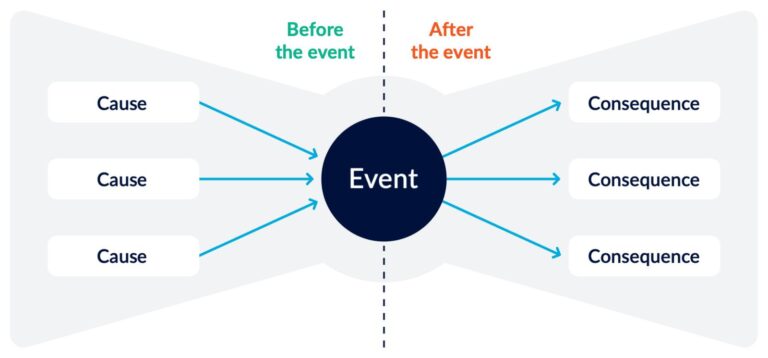

Instead, it traces back to an earlier and more fundamental question:

What happens if this function does not behave as intended?

That question is addressed through the Functional Hazard Assessment (FHA), which establishes the severity of potential failure conditions at the aircraft or system level, and in doing so, provides the foundation upon which assurance levels are eventually built.
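As a starting point only, the baseline correspondence between failure condition severity and software level described in DO-178C can be written as a simple lookup. The sketch below is illustrative: the names `Severity`, `baseline_level`, and `most_demanding_level` are assumptions introduced here, and it deliberately ignores the architectural considerations that can change an allocation.

```python
from enum import Enum
from typing import Iterable


class Severity(Enum):
    """Failure condition categories as commonly used in civil aviation."""
    CATASTROPHIC = "Catastrophic"
    HAZARDOUS = "Hazardous"
    MAJOR = "Major"
    MINOR = "Minor"
    NO_SAFETY_EFFECT = "No Safety Effect"


# Baseline correspondence before any architectural argument is applied.
BASELINE_LEVEL = {
    Severity.CATASTROPHIC: "A",
    Severity.HAZARDOUS: "B",
    Severity.MAJOR: "C",
    Severity.MINOR: "D",
    Severity.NO_SAFETY_EFFECT: "E",
}


def baseline_level(severity: Severity) -> str:
    """Return the baseline level for a single failure condition."""
    return BASELINE_LEVEL[severity]


def most_demanding_level(severities: Iterable[Severity]) -> str:
    """Software contributing to several failure conditions takes the most
    demanding level among them. 'A' sorts before 'E', so min() selects it.
    Raises ValueError if no severities are given."""
    return min(baseline_level(s) for s in severities)


print(baseline_level(Severity.MAJOR))                                # C
print(most_demanding_level([Severity.MINOR, Severity.HAZARDOUS]))    # B
```

The lookup itself is trivial. The judgement lies in deciding which failure conditions a given function actually contributes to, and that is a system-level question rather than a software one.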

What flows down is not just a label, but an interpretation

When a DAL is assigned, what is often presented as a clean and structured outcome is, in reality, the result of several layers of reasoning that sit between the original hazard and the implementation of a specific function within software.

Between those two points, there are decisions about:

- how the system is decomposed

- how functions are allocated

- what assumptions are made about independence and mitigation

- and how different failure conditions interact

By the time a DAL is attached to a software component, it reflects not only the original hazard severity, but also the architecture and assumptions that have been introduced along the way.

Which means the process is not entirely deterministic

It is entirely possible, and not uncommon, for different teams to interpret the same FHA outcomes in slightly different ways, leading to variations in how assurance levels are allocated across systems, particularly in areas where architectural decisions influence the perceived need for independence or redundancy.

This does not necessarily indicate that one approach is correct and the other is not, but it does highlight that the process involves judgement, and that judgement is shaped by how well the system is understood.

The risk of reducing it to compliance

At some stage in most programmes, there is a tendency for assurance levels to become part of a procedural workflow, where the focus shifts toward ensuring that the correct documentation is in place and that the required activities have been completed for a given DAL.

While those aspects are clearly necessary, they can obscure the underlying purpose, which is to ensure that the level of confidence in the software is appropriate to the potential consequences of its failure.

When that purpose is lost, there is a risk that the process becomes about satisfying requirements rather than understanding risk.

A slightly different way to look at it

Rather than asking what assurance level a piece of software has been assigned, it can be more useful to consider what assumptions must hold true for that assignment to remain valid, particularly in relation to system architecture and the effectiveness of any mitigating features.

This perspective tends to bring attention back to:

- whether independence claims are justified

- whether failure containment is realistic

- and whether the system behaves as expected when those assumptions are challenged
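One way to keep those questions visible is to record the assumptions alongside the allocation itself, so that a level is never read without them. The following is a minimal sketch of that idea, not a prescribed method: the class names, fields, component name, and hazard identifier are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Assumption:
    description: str
    evidence: str = ""        # reference to the analysis or test supporting the claim
    still_valid: bool = True  # set to False when a design change undermines it


@dataclass
class Allocation:
    component: str
    level: str
    hazard_id: str  # link back to the originating failure condition
    assumptions: list[Assumption] = field(default_factory=list)

    def open_issues(self) -> list[str]:
        """List assumptions that lack evidence or have been invalidated."""
        issues = []
        for a in self.assumptions:
            if not a.evidence:
                issues.append(f"no evidence: {a.description}")
            if not a.still_valid:
                issues.append(f"invalidated: {a.description}")
        return issues

    def needs_review(self) -> bool:
        """The allocation should be revisited if any assumption is in doubt."""
        return bool(self.open_issues())


# Hypothetical example: a monitor allocated a lower level on the basis of
# independence from the function it monitors.
monitor = Allocation(
    component="hypothetical_monitor",
    level="C",
    hazard_id="FC-EXAMPLE-01",
    assumptions=[
        Assumption("monitor is independent of the command channel", evidence="CCA-EXAMPLE"),
        Assumption("monitor detects loss of command within the required time"),
    ],
)

print(monitor.needs_review())  # True
print(monitor.open_issues())   # ['no evidence: monitor detects loss of command within the required time']
```

The value of a record like this is less in the tooling than in the habit: an allocation whose assumptions cannot be listed, or cannot be supported, is one that has not really been justified.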

Where other standards and frameworks fit

Depending on the context, additional standards and processes come into play, including system-level development and safety assessment guidance such as ARP4754A and ARP4761, and hardware assurance guidance such as DO-254, all of which contribute to the overall safety argument and influence how assurance is derived and justified.

While the details can vary, the underlying principle remains consistent, in that the level of assurance applied should be proportional to the potential impact of failure, and traceable back to clearly defined hazards.

Final thought

Software assurance levels are often presented as structured outputs of a well-defined process, but in practice, they are better understood as indicators of how a system interprets and manages risk, shaped by both the original hazard analysis and the architectural decisions that follow.

And when viewed in that way, they can provide insight not just into the software itself, but into the assumptions, trade-offs, and areas of uncertainty that exist within the system as a whole.
