Mapping System Intent to Failure States

Functional Hazard Assessment (FHA) sounds formal, but at its core it’s a very simple idea.

You’re just asking:

“What is this system supposed to do… and what happens if it doesn’t do that properly?”

That’s it.

Everything else is just structure built around that question.

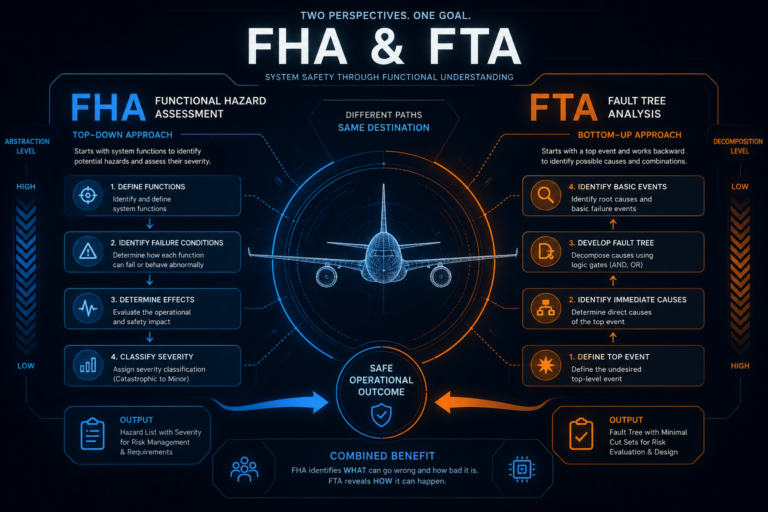

Where FHA actually sits in the thinking process

FHA sits right at the beginning of system safety thinking.

Not when the system is fully designed.

Not when you’re testing hardware.

But when you’re still trying to understand what the system even is.

So instead of thinking in terms of components, you step back and think in terms of functions.

For example:

Maintain altitude

Provide airspeed information

Control aircraft pitch

Detect terrain

You’re not asking how it does those things yet.

You’re only asking what it is supposed to achieve.

That shift is more important than it sounds.

Because once you define function properly, everything else follows from it.

Step 1: Start with intent, not hardware

A common mistake, especially early on, is jumping straight into components.

Pitot tubes, sensors, computers, software modules.

But FHA deliberately avoids that.

You don’t start with:

“This system has a sensor”

You start with:

“This system provides airspeed information to the pilot and flight systems”

Same system in reality, but a completely different level of thinking.

Because now you’re focused on behaviour, not implementation.

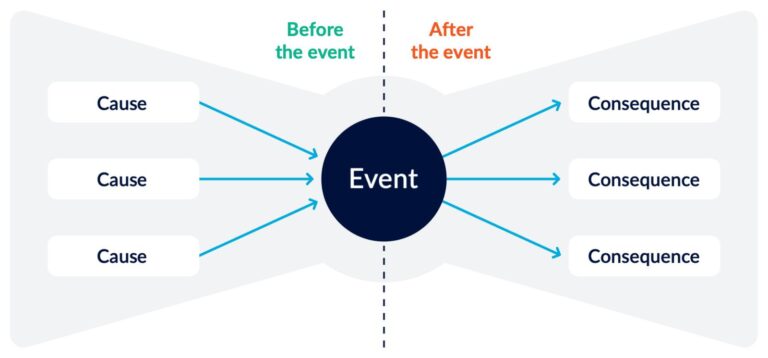

Step 2: Ask how the function can fail

Once you have the function, you start exploring what “bad” looks like.

Not in a binary way, but as distinct modes of failure.

A function can fail by:

not working at all

working partially

working incorrectly

working inconsistently

So using airspeed again, you might end up with:

no airspeed available

indicated airspeed higher than actual

indicated airspeed lower than actual

unstable or fluctuating readings

Notice something important here:

we’re not saying why it failed yet.

We’re just describing what the system looks like when it’s wrong.

That separation matters later when you move into fault analysis.
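As a rough sketch, this step can be written down as a small data structure. The names here (`GUIDE_WORDS`, `FailureCondition`, `enumerate_failures`) are made up for illustration, and the way the airspeed examples are grouped under each kind of failure is an assumption, not something FHA prescribes. Notice that nothing in the record says why the failure happened.

```python
from dataclasses import dataclass

# The four ways a function can fail, as listed above.
GUIDE_WORDS = (
    "not working at all",
    "working partially",
    "working incorrectly",
    "working inconsistently",
)


@dataclass(frozen=True)
class FailureCondition:
    function: str      # what the system is supposed to do
    guide_word: str    # which kind of "wrong" this is
    description: str   # what the failure looks like, with no cause attached


def enumerate_failures(function, examples):
    """Return (conditions, uncovered_guide_words) for one function."""
    conditions = []
    uncovered = []
    for guide_word in GUIDE_WORDS:
        descriptions = examples.get(guide_word, [])
        if not descriptions:
            # No example yet for this kind of failure: flag it for review.
            uncovered.append(guide_word)
        for description in descriptions:
            conditions.append(FailureCondition(function, guide_word, description))
    return conditions, uncovered


# Airspeed examples from the text; the grouping under guide words is assumed.
airspeed_examples = {
    "not working at all": ["no airspeed available"],
    "working incorrectly": ["airspeed reads too high", "airspeed reads too low"],
    "working inconsistently": ["unstable or fluctuating readings"],
}

conditions, uncovered = enumerate_failures(
    "Provide airspeed information", airspeed_examples
)
for condition in conditions:
    print(f"{condition.guide_word}: {condition.description}")
print("still to consider:", uncovered)
```

The `uncovered` list is the useful part: the airspeed examples above never cover "working partially", so the sketch surfaces that as a gap to think about rather than silently skipping it.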

Step 3: Think about what that failure actually does in flight

This is where FHA becomes more than just a list.

You start asking:

“So what does this actually mean for the aircraft?”

Because a failure doesn’t exist in isolation.

It exists inside a flying system with pilots, automation, and physics all interacting.

For example:

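If indicated airspeed reads too high → pilots may reduce thrust or pitch up, and the aircraft may approach stall conditions

If indicated airspeed reads too low → pilots may add thrust or pitch down, and the aircraft may approach overspeed

If airspeed is unstable → automation and pilot inputs may conflict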

So now you’re no longer just describing failure.

You’re describing behaviour under failure.

That’s the real point of FHA.

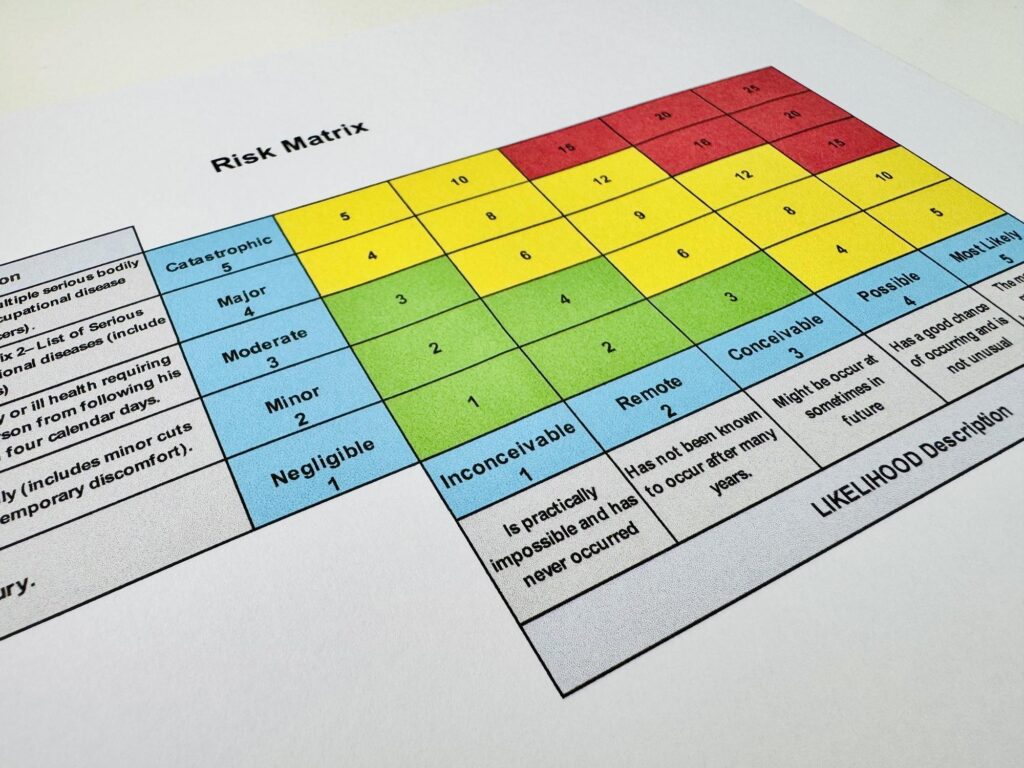

Step 4: Assign severity (but don’t overthink it yet)

Once you understand impact, you classify it:

Catastrophic

Hazardous

Major

Minor

No safety effect

This part often gets treated like bureaucracy.

But it’s actually doing something important:

it tells you where to focus engineering effort.

Not all failures are equal.

And FHA is where you first make that distinction.
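One way to picture "where to focus engineering effort" is to treat the five classes as an ordered scale and sort by it. This is only a sketch: `Severity` and `prioritise` are hypothetical names, and the severities attached to the airspeed failures below are placeholders chosen to show the sorting, not real classifications.

```python
from enum import IntEnum


class Severity(IntEnum):
    # Ordered so that a higher value means a more severe effect.
    NO_SAFETY_EFFECT = 0
    MINOR = 1
    MAJOR = 2
    HAZARDOUS = 3
    CATASTROPHIC = 4


def prioritise(rows):
    """Sort (failure_condition, severity) pairs, most severe first.

    Python's sort is stable, so equal severities keep their input order.
    """
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Placeholder severities for illustration only, NOT an actual assessment.
rows = [
    ("unstable or fluctuating readings", Severity.MAJOR),
    ("no airspeed available", Severity.HAZARDOUS),
    ("airspeed reads wrong but looks believable", Severity.CATASTROPHIC),
]

for condition, severity in prioritise(rows):
    print(f"{severity.name:<16} {condition}")
```

Using an `IntEnum` rather than plain strings means the ordering lives in one place, so later steps can compare severities directly (for example `severity >= Severity.HAZARDOUS`).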

The key idea people miss about FHA

FHA is not about finding problems in hardware.

It’s about understanding what it means for a function to be wrong.

That sounds subtle, but it changes how you think about everything downstream.

Because once you define:

“What happens if this function is wrong?”

you’ve already set the boundaries for:

design decisions

redundancy requirements

fault analysis (FTA, FMEA, etc.)

certification expectations

FHA is basically the “translation layer” between system intent and system risk.

Where things get interesting in real systems

In simple systems, FHA feels clean.

One function, one failure, one effect.

But in real aircraft systems, things get messier.

Because functions aren’t isolated.

They interact.

Air data affects automation

Automation affects pilot perception

Pilot actions feed back into system state

Sensors feed multiple subsystems at once

So even at the FHA level, you start seeing something important:

failures don’t stay local.

They propagate through every layer that interprets the failed function’s output: automation, displays, and the crew.
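The interactions listed above can be sketched as a small "feeds into" graph, with a breadth-first walk showing everything a single failed function can reach. The graph is a toy: `FEEDS` and `affected_by` are hypothetical names, and two of the links (marked in the comments) are assumptions added to close the loop, not statements from any real architecture.

```python
from collections import deque

# "X feeds into Y" links, based on the interactions listed above.
FEEDS = {
    "sensors": ["air data", "automation"],   # sensors feed multiple subsystems
    "air data": ["automation"],
    "automation": ["pilot perception"],
    "pilot perception": ["pilot actions"],   # assumed link, implied by the text
    "pilot actions": ["system state"],
    "system state": ["automation"],          # assumed feedback loop
}


def affected_by(failed, feeds):
    """Return everything downstream of `failed`, in breadth-first order."""
    seen = {failed}
    order = []
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for downstream in feeds.get(current, []):
            if downstream not in seen:   # guards against feedback loops
                seen.add(downstream)
                order.append(downstream)
                queue.append(downstream)
    return order


print(affected_by("air data", FEEDS))
```

Even in this toy graph, a failure in air data reaches automation, pilot perception, pilot actions, and system state, which is the "failures don’t stay local" point in miniature.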

A more honest way to think about FHA

If you strip away the formal language, FHA is really just this:

You’re mapping how trust in system functions breaks down.

Because every function in an aircraft is really a promise:

“This system will give you correct information”

“This system will maintain stability”

“This system will behave predictably”

FHA is just asking:

“What happens when that promise is no longer reliable?”

Why FHA matters in modern aviation

In older systems, you could often isolate problems.

A part failed → you replaced it → system recovered.

In modern systems, that’s not how failure behaves anymore.

Because functions are distributed across software, sensors, and human interpretation.

So when a function fails now, it doesn’t just stop.

It degrades understanding across the system.

And that’s where FHA becomes really important.

It forces you to think in terms of:

system behaviour under degraded trust

not just broken components.

Closing Thought

FHA is often treated like an early checklist in safety engineering.

But if you use it properly, it’s something more fundamental.

It’s the first time you stop thinking:

“What is this system made of?”

and start thinking:

“What does it mean when this system can no longer be trusted to do what it says it does?”

Because in modern aviation systems, that’s usually where risk actually lives.

Not in total failure.

But in partial, believable failure modes where the system still works… just not in the way you think it does.
