When people hear the word safety, they often think of something absolute, almost binary: a system is either completely safe or fundamentally unsafe, with nothing meaningful in between.

But in engineering, especially in fields like aviation or complex system design, that idea doesn’t hold up in practice. Once you start dealing with real-world operations, uncertainty, human interaction, and environmental variability, you quickly realise that “perfect safety” is not only unrealistic but not even a useful design target.

So the question engineers actually work with is not “how do we make this completely safe?” but something more grounded and practical: “how safe is safe enough for this system to operate in the real world while still being useful?”

Safety isn’t an absolute—it’s a balance

Every engineered system exists in a trade space where different objectives compete with each other. Safety is one of those objectives, sitting alongside performance, cost, complexity, maintainability, and overall operational usefulness.

You can’t maximise all of these at the same time. If you push safety to an extreme where every possible risk is eliminated regardless of cost or complexity, you usually end up with a system so restricted, over-engineered, or operationally limited that it becomes impractical or even unusable in real-world conditions.

So instead of chasing an ideal of “zero risk,” what engineers actually aim for is a level of risk that is controlled, understood, and reduced to a point where it is considered acceptable within the context of how the system will actually be used.

What “acceptably safe” actually means

The phrase “acceptably safe” is often misunderstood. It doesn’t mean “good enough” in a casual sense, and it definitely doesn’t mean that risks are ignored or taken lightly. It refers to a deliberate engineering and regulatory judgement that the remaining risk in a system has been identified, analysed, reduced where reasonably possible, and then agreed to be tolerable within a defined operational context.

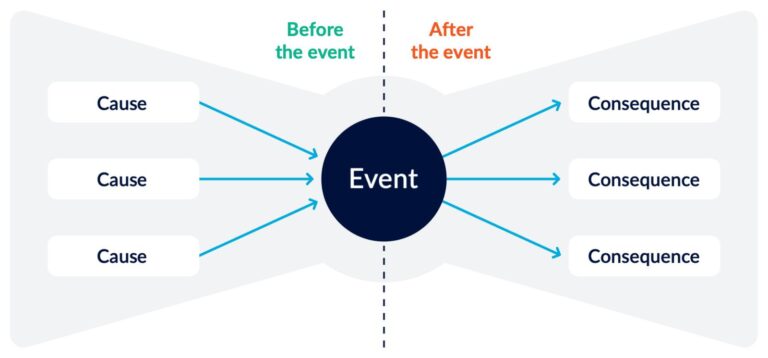

In other words, we fully accept that things can still go wrong, but we ensure that we understand how they can go wrong, how likely that is, what the consequences would be, and what barriers exist to prevent those consequences from escalating into something unacceptable.

So safety in this sense is not about eliminating uncertainty, but about making sure uncertainty is bounded, visible, and managed in a way that is compatible with real-world operation.

Why zero risk is not the goal

It might feel counterintuitive at first, but zero risk is not a meaningful engineering objective. The closer you push a system toward eliminating every conceivable risk, the more you introduce other problems: excessive complexity, operational constraints, reduced flexibility, or even new failure modes that arise from over-constrained design decisions.

For example, adding layers of redundancy everywhere might sound like an obvious safety improvement, but in practice it can introduce ambiguity when those redundant elements disagree. Similarly, overly conservative design margins can push a system into inefficient or even unsafe operating regions during edge cases that weren’t fully anticipated.
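To make the redundancy point concrete, here is a minimal Python sketch of a voter over redundant sensor channels. The function name `vote`, the `tolerance` parameter, and the status labels are illustrative assumptions, not taken from any real system. It shows that two disagreeing channels tell you something is wrong but not which channel is wrong, while a third channel lets a single outlier be outvoted.

```python
from statistics import median


def vote(readings, tolerance):
    """Combine redundant readings into (value, status).

    Hypothetical statuses:
      "agree"     - all channels are within `tolerance` of each other
      "outvoted"  - a minority of channels disagree with the median
      "ambiguous" - channels disagree and no majority can be identified
    """
    if not readings:
        raise ValueError("at least one reading is required")

    spread = max(readings) - min(readings)
    if spread <= tolerance:
        return median(readings), "agree"

    if len(readings) < 3:
        # Two channels disagree: there is no way to tell which one is faulty.
        return None, "ambiguous"

    mid = median(readings)
    outliers = [r for r in readings if abs(r - mid) > tolerance]
    if len(outliers) * 2 < len(readings):
        # A strict majority sits near the median, so the outliers are outvoted.
        return mid, "outvoted"
    return None, "ambiguous"


if __name__ == "__main__":
    print(vote([100.0, 140.0], tolerance=1.0))         # two channels disagree
    print(vote([100.0, 100.4, 140.0], tolerance=1.0))  # third channel breaks the tie
```

Note that the extra channel does not remove the problem, it only moves it: three channels that all disagree are just as ambiguous as two.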

So instead of thinking in terms of “remove all risk,” modern safety engineering tends to focus on controlling risk in a way that preserves system usability while keeping failure outcomes within acceptable and recoverable bounds.

The idea of “risk you can live with”

At some point in every safety-driven design process, engineers and regulators have to accept that uncertainty can never be fully eliminated. From then on, the goal is not perfection but controllability.

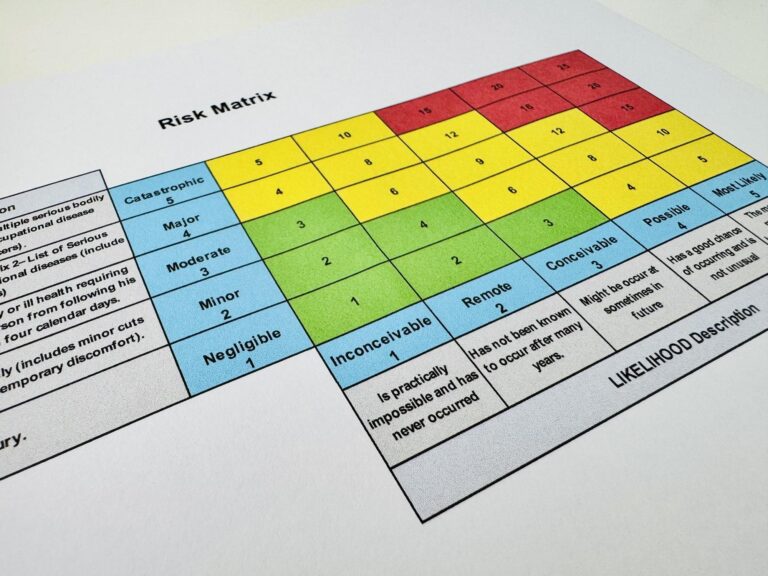

This leads to a more practical question, which is not “is this safe in an absolute sense?”, but rather “is the remaining risk acceptable given the severity of potential outcomes, the likelihood of occurrence, and the system’s ability to handle degraded conditions in real operation?”

That judgement is not purely technical either. It depends heavily on context: how the system will be used, how often it is exposed to risk, what the consequences of failure are, and how much risk society is willing to accept in exchange for the benefits the system delivers.
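One common way to structure this kind of judgement is a severity-by-likelihood risk matrix. The Python sketch below is purely illustrative: the category names, the numeric scales, and the two thresholds are assumptions made up for this example and are not taken from any regulation or standard. The thresholds are parameters precisely because the acceptable level depends on context.

```python
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical four-step scale, for illustration only.
    NEGLIGIBLE = 1
    MINOR = 2
    MAJOR = 3
    CATASTROPHIC = 4


class Likelihood(IntEnum):
    # Hypothetical four-step scale, for illustration only.
    RARE = 1
    UNLIKELY = 2
    OCCASIONAL = 3
    FREQUENT = 4


def classify(severity, likelihood, acceptable_max=3, tolerable_max=8):
    """Classify a hazard by its severity x likelihood score.

    `acceptable_max` and `tolerable_max` are assumed, context-dependent
    thresholds: a different operational context would set them differently.
    """
    if acceptable_max > tolerable_max:
        raise ValueError("acceptable_max must not exceed tolerable_max")

    score = int(severity) * int(likelihood)
    if score <= acceptable_max:
        return "acceptable"
    if score <= tolerable_max:
        return "tolerable with mitigation"
    return "unacceptable"


if __name__ == "__main__":
    print(classify(Severity.MINOR, Likelihood.RARE))
    print(classify(Severity.CATASTROPHIC, Likelihood.RARE))
    print(classify(Severity.MAJOR, Likelihood.OCCASIONAL))
```

The same hazard can land in a different band when the thresholds change, which is the code-level version of saying that acceptability is a contextual judgement rather than a property of the hazard alone.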

Safety in real systems is about trade-offs, not absolutes

One of the biggest mindset shifts in safety engineering is recognising that safety is not a fixed property that a system either has or does not have. It is the outcome of a continuous set of trade-offs made across design, operation, regulation, and human interaction with the system.

For instance, increasing automation might improve safety in routine conditions by reducing workload and human error, but it can also introduce new risks related to reduced situational awareness or hidden system dependencies. Likewise, a more conservative system design might behave more predictably under normal conditions but respond less effectively in unusual or unexpected scenarios.

So what we call “safe” is always the result of balancing competing pressures rather than achieving an absolute state of correctness.

Why this matters in aviation specifically

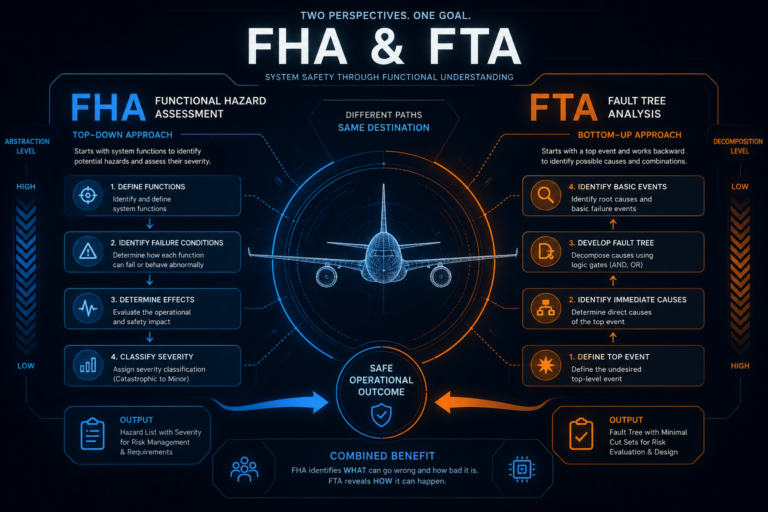

Aviation is often described as one of the safest forms of transport, and that is true. But that level of safety does not come from eliminating all possible failure modes, which would be impossible. It comes from deeply understanding how systems fail, designing layers of protection that prevent those failures from escalating, and ensuring that when something does go wrong, it remains contained and recoverable.

So safety in aviation is less about preventing failure entirely and more about ensuring that failure does not cascade into loss of control or loss of the system as a whole.
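A rough way to see why layered protection works is a back-of-the-envelope calculation. The sketch below assumes, as a deliberate simplification, that every barrier fails independently of the others; the function name and the example probabilities are hypothetical and not drawn from any real aircraft data.

```python
from math import prod


def escalation_probability(p_initiating, barrier_failure_probs):
    """Probability that an initiating failure gets past every barrier.

    Assumes the barriers fail independently of each other, so the
    probabilities simply multiply. Real barriers can share causes
    (a common power supply, the same software fault), which makes the
    true figure higher than this product.
    """
    for p in (p_initiating, *barrier_failure_probs):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie between 0 and 1")
    return p_initiating * prod(barrier_failure_probs)


if __name__ == "__main__":
    # Hypothetical numbers: one initiating failure and two barriers.
    print(escalation_probability(1e-3, [1e-2, 1e-2]))
```

None of the individual layers has to be perfect for the combined figure to be small, but the result is only as trustworthy as the independence assumption behind it.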

The uncomfortable but important truth

A completely safe system is not just unrealistic. It is fundamentally incompatible with systems that are expected to do anything useful in the real world, because the moment you demand absolute safety, you remove so much flexibility, performance, and adaptability that the system can no longer operate effectively in its intended environment.

So instead, we accept a more grounded reality, which is that failure will always exist in some form, and the real engineering question becomes whether that failure stays within known, understood, and acceptable boundaries when it inevitably occurs.

Closing thought

Safety is not a finish line that you eventually cross and declare complete. It is an ongoing judgement about the balance between risk, performance, and usability under real-world constraints, where the goal is not perfection but controlled imperfection that still allows the system to function reliably within acceptable limits.

And once you start looking at it this way, safety stops being about eliminating all possibility of failure, and instead becomes about designing systems that remain understandable, predictable, and controllable even when they are operating under stress, uncertainty, or degradation.
